In short: Anthropic has finalized an agreement to secure approximately 3.5 gigawatts of next-generation Google TPU compute capacity through Broadcom, starting in 2027. The deal is Anthropic's largest infrastructure commitment to date and coincides with a revenue run rate that has passed $30 billion, up from approximately $9 billion at the end of 2025.

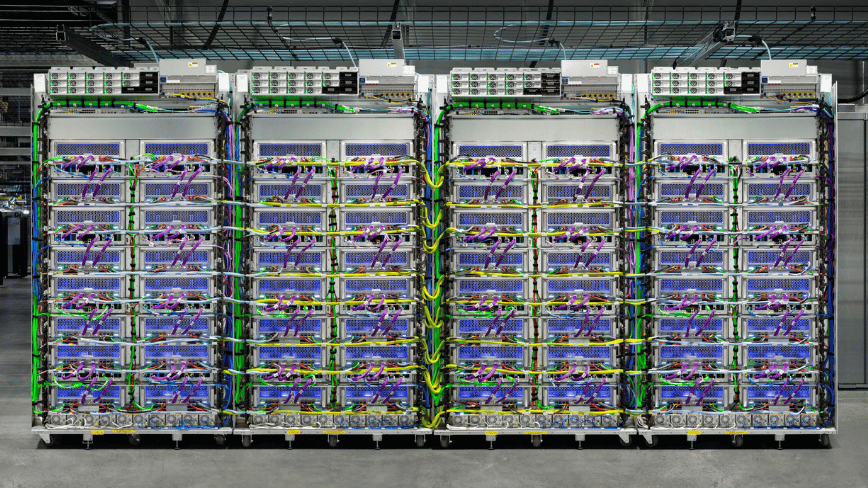

On April 6, 2026, Anthropic announced a partnership with Google and Broadcom that secures gigawatt-scale compute capacity. The agreement gives Anthropic access to 3.5 gigawatts of Google tensor processing unit (TPU) capacity via Broadcom, on top of the 1 gigawatt already allocated to the company in 2026.

Krishna Rao, Anthropic’s chief financial officer, described the deal as “our most significant compute commitment to date” and framed it as part of a disciplined approach to scaling infrastructure. Most of the new compute capacity will be based in the United States, extending the commitment Anthropic made in November 2025 to invest $50 billion in American AI computing infrastructure.

Collaborative Infrastructure Strategy

The announcement involves three parties: Broadcom, Anthropic, and Google. Broadcom will serve as the intermediary between Google's custom silicon and Anthropic’s training and inference workloads. Separately, Broadcom has entered into a long-term agreement with Google to design and supply future generations of custom TPU chips and to supply networking components for Google's AI racks through 2031.

Broadcom is becoming a pivotal player in AI infrastructure. Under CEO Hock Tan, the company is focused on building the silicon and interconnects that AI models depend on, rather than developing AI models itself. Following the announcement, Broadcom's shares rose approximately 3% in after-hours trading, reflecting investor confidence in companies that operate at the foundational layer of AI infrastructure. Analysts predict that Broadcom could generate $21 billion in AI revenue from Anthropic in 2026, potentially doubling to $42 billion in 2027.

The scale of the partnership was foreshadowed by a September 2025 earnings call during which Hock Tan revealed that a significant customer had placed a $10 billion order for custom TPU racks. By December 2025, it was confirmed that Anthropic was the customer, with an additional $11 billion order following shortly after. The April 2026 announcement marks the third phase of the partnership, moving from a reported $21 billion commitment to a multi-gigawatt infrastructure agreement with a clear delivery timeline.

Revenue Growth and Market Dynamics

The compute deal comes against the backdrop of Anthropic's rapid commercial growth. The company reported that its revenue run rate has exceeded $30 billion, up from approximately $9 billion at the end of 2025. This growth, more than tripling in just over three months, is attributed to an enterprise sales strategy that accelerated after Anthropic's Series G funding round on February 12, 2026, which raised $30 billion at a post-money valuation of $380 billion.

Since the Series G round closed, the number of business customers each spending over $1 million annually has exceeded 1,000, doubling within a few months. This surge in enterprise adoption is the driving force behind the need for expanded compute capacity.
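As a rough check on the figures above, the short Python sketch below works out the growth multiple implied by the two run-rate numbers and the minimum annual spend implied by the large-customer count. The variable names are illustrative, and the inputs are the article's approximate figures treated as lower bounds, so the results are back-of-the-envelope estimates rather than reported numbers.

```python
# Approximate figures as stated in the article (assumed to be lower bounds).
run_rate_end_2025_musd = 9_000      # ~$9 billion run rate at the end of 2025, in $ millions
run_rate_april_2026_musd = 30_000   # "exceeded $30 billion", in $ millions

# Growth multiple implied by the two run-rate figures.
growth_multiple = run_rate_april_2026_musd / run_rate_end_2025_musd

# Customers each spending over $1 million annually: more than 1,000 of them.
large_customers = 1_000
min_spend_per_customer_musd = 1     # $1 million, in $ millions

# Minimum combined annual spend from those customers, in $ millions.
floor_from_large_customers_musd = large_customers * min_spend_per_customer_musd

print(f"Implied growth multiple: {growth_multiple:.2f}x")
print(f"Minimum annual spend from $1M+ customers: ${floor_from_large_customers_musd / 1000:.1f} billion")
```

The multiple comes out above 3, consistent with the "more than tripling" description. The customer figure is only a floor, since each of those customers spends more than $1 million a year.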

Strategic Multi-Cloud Approach

Anthropic’s infrastructure strategy stands out for its deliberate multi-vendor chip approach. Claude is trained and run on three hardware platforms: Amazon's Trainium chips, Google's TPUs, and Nvidia GPUs. This cross-platform availability improves both commercial reach and technical resilience, allowing Anthropic to shift workloads between platforms as needed and protecting against disruptions from any single vendor.

Anthropic's relationship with AWS remains essential, with Amazon having invested $8 billion and supporting Project Rainier, a supercomputer cluster that was expected to expand significantly by the end of 2025. The partnership with Google, now bolstered by the Broadcom deal, complements rather than replaces this foundational relationship.

Commitment to US Infrastructure

The April deal reinforces Anthropic's commitment to American AI infrastructure, following the $50 billion pledge made in November 2025. That buildout, initially developed with UK-based Fluidstack, is set to add AI computing capacity across the United States, with data centers in Texas and New York coming online through 2026.

As AI becomes a strategic priority, Anthropic's investments align with broader government initiatives aimed at expanding US-based compute capacity. The result is that a considerable portion of next-generation AI training capacity is being secured within American borders.

Implications for the AI Compute Race

The Anthropic-Google-Broadcom agreement exemplifies a growing trend in the AI sector: AI labs are growing so quickly that their compute demands far exceed what their revenue can fund. This dynamic requires substantial financial engineering, as seen in SoftBank’s $40 billion funding for OpenAI and Meta’s $27 billion infrastructure deal with Nebius.

As the compute arms race evolves, AI companies are reassessing their relationships with the services built on their models. Recently, Anthropic has begun to restrict access to Claude via certain third-party frameworks, reflecting the financial pressure of sustaining frontier model inference and the need to decide which use cases to subsidize and which to price explicitly.

Broadcom’s evolution from a lesser-known player in AI two years ago to a critical part of the infrastructure behind prominent AI models such as Google’s Gemini and Anthropic’s Claude illustrates a significant change within the semiconductor industry. While Nvidia remains a dominant force in AI accelerators, Broadcom’s rise as a preferred partner for hyperscale AI compute marks a shift in industry dynamics.

Source: TNW | Anthropic News